If you need to reach multiple services in a Kubernetes cluster from outside the cluster, configuring an ingress with an ingress controller is usually the best solution.

Ingress controllers implement the networking rules that decide how incoming connections are accepted and routed. One of the most used ingress controllers is NGINX, a powerful web server that acts as an interface between a cluster and the outside world.

In this tutorial, you will learn how to set up an NGINX Kubernetes Ingress Controller step by step. Still interested?

Let’s begin!

Prerequisites

This post will be a step-by-step tutorial. To follow along, be sure you have:

- An Ubuntu 14.04.4 LTS or later machine with Docker installed. This tutorial uses Ubuntu 18.04.5 LTS with Docker v19.03.8.

- kubectl command-line interface

- A Kubernetes cluster

What is a Kubernetes Ingress Controller?

If you need to expose your application outside the Kubernetes cluster, you have several options, including NodePort, LoadBalancer, and Ingress.

- A Kubernetes service of type NodePort exposes the application on a port across each of your nodes.

- A Kubernetes service of type LoadBalancer creates an external load balancer associated with a specific IP address that points to a Kubernetes service in your cluster.

- An Ingress, which is a separate Kubernetes resource rather than a service type, lets you route traffic from outside the cluster to multiple Kubernetes services.

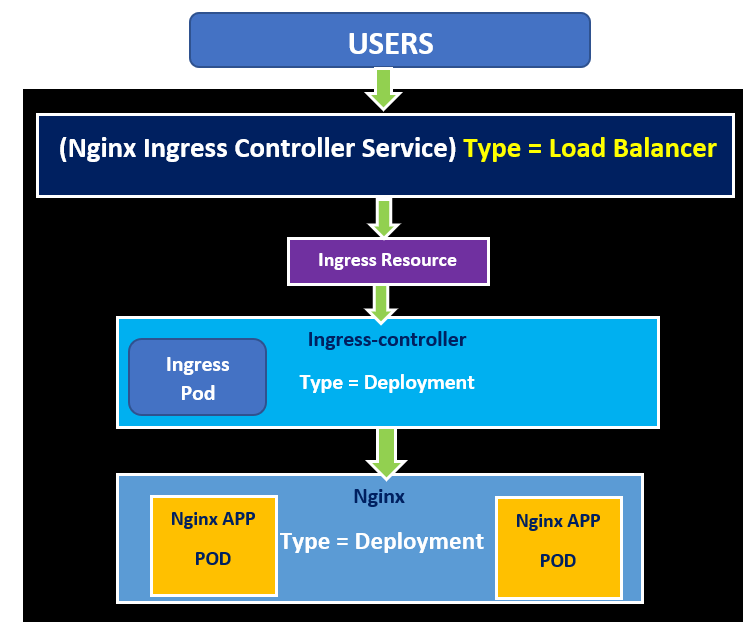

Ingress has two main components: the ingress controller and ingress resources.

- Ingress resources define the rules for routing HTTP/HTTPS traffic from outside the cluster to the Kubernetes services.

- The ingress controller accepts traffic via an ingress-managed load balancer and then routes traffic to the defined Kubernetes services based on the rules defined in the ingress resources.

As the image above shows, the client connects to Kubernetes services in the cluster through an ingress-managed load balancer and an ingress controller, which applies the set of rules defined in the ingress resources.
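To make the idea of routing rules more concrete, here is a minimal sketch that writes a hypothetical Ingress manifest fanning traffic out to two services by path. The file name fanout-ingress.yaml, the host demo.example.com, and the service names app-one-service and app-two-service are all assumptions made up for illustration and are not part of this tutorial's setup. The sketch also assumes an ingress class named nginx exists in the cluster.

```shell
# Write a hypothetical fan-out Ingress manifest (all names are illustrative).
cat > fanout-ingress.yaml <<'EOF'
apiVersion: networking.k8s.io/v1
kind: Ingress
metadata:
  name: fanout-ingress
spec:
  # Assumes an IngressClass named "nginx" is available
  ingressClassName: nginx
  rules:
    - host: demo.example.com
      http:
        paths:
          # Requests under /one go to the first (hypothetical) service
          - path: /one
            pathType: Prefix
            backend:
              service:
                name: app-one-service
                port:
                  number: 80
          # Requests under /two go to the second (hypothetical) service
          - path: /two
            pathType: Prefix
            backend:
              service:
                name: app-two-service
                port:
                  number: 80
EOF
echo "Wrote fanout-ingress.yaml"
```

Each entry under paths is one rule: the controller matches the request path and forwards the request to the named service. The manifest is only written to disk here; nothing is applied to a cluster.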

Installing NGINX Ingress Controller using Helm

Let’s now dive into some hands-on tutorials. To get your hands dirty, let’s first set up an ingress controller that defines rules for network connectivity from outside the cluster using ingress resources. You’ll install this ingress controller with Helm, a popular package manager.

1. Open your favorite SSH client and connect to your Kubernetes cluster.

2. Create a folder named ~/nginx-ingress-controller, then change (cd) the working directory to that folder. This folder will contain all of the configuration files you’ll be working with.

mkdir ~/nginx-ingress-controller

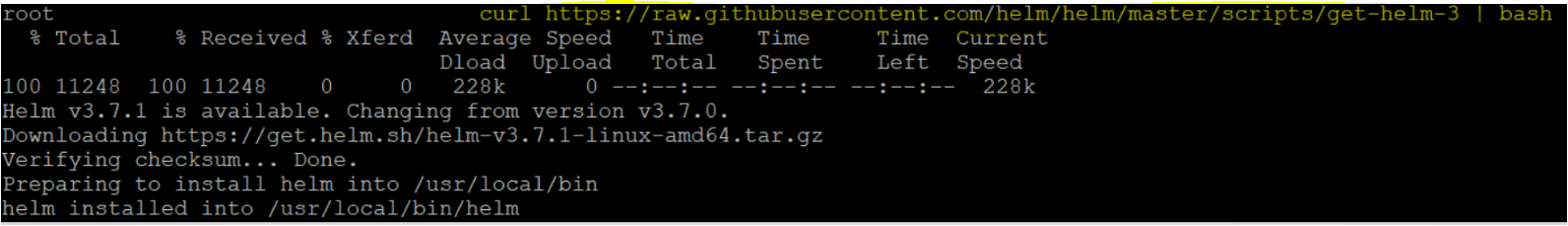

cd ~/nginx-ingress-controller3. Next, add an official stable helm repository by running the curl command. You must perform this step because, by default, the helm repository is not present in the /etc/apt/sources.list file. This step is to ensure Helm can find the ingress controller package when needed.

curl https://raw.githubusercontent.com/helm/helm/master/scripts/get-helm-3 | bash

4. Now that you have Helm installed on your machine, add the NGINX ingress controller repository using the helm repo add command. The NGINX ingress controller repository will contain the charts related to ingress-nginx that will be used for the installation of the ingress controller.

helm repo add ingress-nginx https://kubernetes.github.io/ingress-nginxIf you see below, ingress-nginx has been added to your repository, that confirms the successful addition of the ingress-nginx repository in the helm.

5. Next, update Helm by running the helm repo update command. Helm repo update command updates the information of available charts locally from chart repositories.

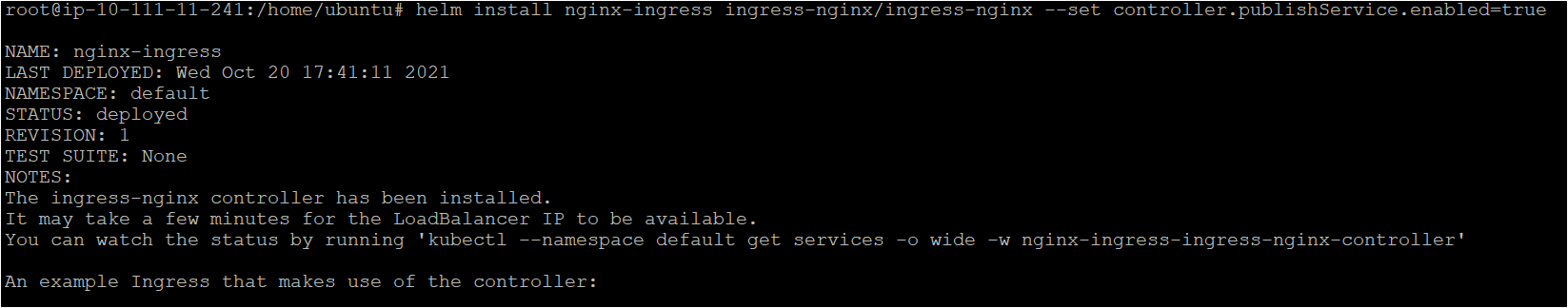

6. Finally, install the NGINX ingress controller by running the helm install command. The command creates a release named nginx-ingress from the ingress-nginx/ingress-nginx chart, and --set sets the chart value controller.publishService.enabled to true on the command line.

helm install nginx-ingress ingress-nginx/ingress-nginx --set controller.publishService.enabled=true

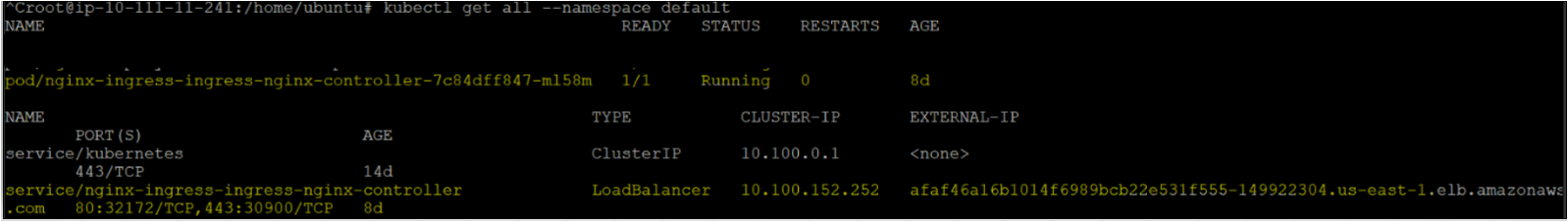

7. Verify that the NGINX ingress controller is installed by running the kubectl get all command, which lists the main resources in the current namespace.

kubectl get all

After the command completes, notice that the ingress controller has two Kubernetes objects: a pod and a service.

- The pod runs the controller, which watches the cluster's API server for changes to Ingress resources.

- The service of type LoadBalancer receives external traffic and passes it to the controller, which then routes it to Kubernetes services as defined in the Ingress resources.

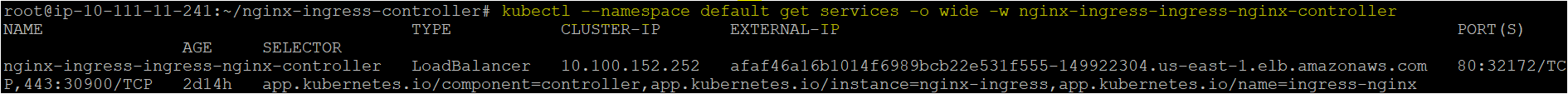

8. (Optional) You can copy the load balancer details from the output of the previous step, or look them up by running the kubectl get services command.

kubectl --namespace default get services -o wide -w nginx-ingress-ingress-nginx-controller

The -o wide flag prints additional details about the Kubernetes object (here, the service), and the -w flag keeps watching the service for changes until you press Ctrl+C.

Note the EXTERNAL-IP of the load balancer (afaf46………..us-east-1.elb.amazonaws.com). You will need it when you configure the ingress resource file.
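If you prefer to script the verification steps above, the following is a rough sketch of two helper functions. The function names wait_for_controller and get_lb_hostname are hypothetical. The sketch assumes the controller runs in the default namespace, that the chart labels its pod with app.kubernetes.io/component=controller, and that the cloud provider reports a hostname (as AWS does) rather than an IP address.

```shell
# Hypothetical helpers; the function names are illustrative, not part of kubectl.

# Block until the controller pod reports Ready.
# $1 = namespace (default: default), $2 = timeout (default: 120s)
wait_for_controller() {
  kubectl wait --namespace "${1:-default}" \
    --for=condition=ready pod \
    --selector=app.kubernetes.io/component=controller \
    --timeout="${2:-120s}"
}

# Print the load balancer hostname of the controller service.
# On providers that assign an IP instead, replace .hostname with .ip.
# $1 = namespace (default: default)
get_lb_hostname() {
  kubectl --namespace "${1:-default}" get service \
    nginx-ingress-ingress-nginx-controller \
    -o jsonpath='{.status.loadBalancer.ingress[0].hostname}'
}

# Usage (requires access to the cluster):
#   wait_for_controller default 120s
#   LB_HOST="$(get_lb_hostname default)"; echo "$LB_HOST"
```

Capturing the hostname in a variable saves you from copying it by hand out of the kubectl output.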

Deploying an NGINX Application and Service

Previously, you installed an ingress controller using the Helm package manager. But before you can use the ingress controller and ingress, you need a Kubernetes service running in the cluster. Let’s first create one by following the steps below.

Assuming you’re still connected to your Kubernetes cluster via SSH:

1. Create a configuration file named nginx.yaml in the ~/nginx-ingress-controller directory.

The nginx.yaml file below creates a deployment named nginx-deployment and a Kubernetes service named nginx-service. The service will be reached externally through the load-balanced ingress controller service.

The deployment runs two replica pods, and the service routes traffic to them.

---

# Defining the Kubernetes API version

apiVersion: apps/v1

# Defining the type of the object to create kind: Deployment

# Metadata helps uniquely identify the object, including a name string, UID, and optional namespace.

metadata:

# nginx-deployment as the name of the deployment.

name: nginx-deployment

# Labels are key/value pairs attached to objects, such as pods.

# Labels are used to specify identifying attributes of objects that are relevant to users.

labels:

app: nginx-first-app

spec:

# replicas: 2 replicas allow you to define the number of pods you need for deployment

replicas: 2

selectors:

matchLabels:

app: nginx-first-app

template:

metadata:

labels:

app: nginx-first-app

# spec: Allows you to specify the container details such as the image (nginx) container's name (nginx-pod)

spec:

containers:

- name: nginx-pod

image: nginx

---

apiVersion: v1

kind: Service

metadata:

# nginx-service as the name of the service. name: nginx-service

spec:

type: ClusterIP

ports:

- port: 80

targetPort: 8080

selector:

app: nginx-first-app2. Next, run the kubectl apply command to create the deployment and service.

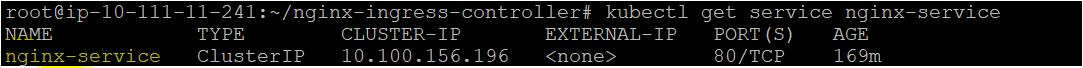

kubectl apply -f nginx.yaml3. Finally, verify if the nginx-service is properly configured by running the kubectl get service command. This nginx service will be accessed via ingress service of load balancer type.

kubectl get service nginx-service
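Beyond checking that the service exists, it can help to confirm that pods actually sit behind it. The sketch below is one way to do that, assuming the resource names from nginx.yaml above. The function name check_nginx_backend is hypothetical.

```shell
# Hypothetical helper; the function name is illustrative.
# Waits for the deployment rollout to finish, then lists the pod
# addresses registered behind nginx-service.
# $1 = rollout timeout (default: 60s)
check_nginx_backend() {
  kubectl rollout status deployment/nginx-deployment --timeout="${1:-60s}" &&
    kubectl get endpoints nginx-service
}

# Usage (requires access to the cluster):
#   check_nginx_backend 60s
```

If the ENDPOINTS column comes back empty, the service selector probably does not match the pod labels.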

Creating the Ingress resource to expose the NGINX service

You’ve now created both the NGINX ingress controller and the Kubernetes service, but they aren’t much good until you create an ingress resource with rules that route traffic from the external address of the ingress controller service (type LoadBalancer) to the Kubernetes service.

Let’s get into it: create an ingress resource file and apply the configuration.

Assuming you are still on the terminal:

1. Create a file named kubernetes-ingress.yaml using your favorite editor. The kubernetes-ingress.yaml file contains the rules that route traffic from the external address of the ingress controller service (type LoadBalancer) to the Kubernetes service.

# Defining the Kubernetes API version

apiVersion:: thetworking.k8s.io/v1

kind: Ingress

metadata:

name: kubernetes-ingress

annotations:

kubernetes.io/ingress.class: nginx

spec:

rules:

# External name of the ingress service with an type load balancer

- host: "afaf46a16b1014f6989bcb22e531f555-149922304.us-east-1.elb.amazonaws.com"

http:

# Rules

paths:

- pathType: Prefix

path: "/"

backend:

# Kubernetes Service that will be redirected from the kubernetes

service:

name: nginx-service

port:

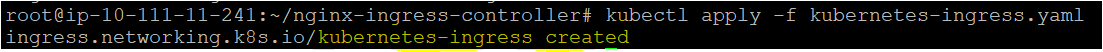

number: 802. Next, run the kubectl apply command to create the ingress resource in the cluster, as shown below. After you will apply the kubernetes-ingress.yaml file in next step it will allow you to access the Kubernetes service from outside the cluster.

kubectl apply -f Kubernetes-ingress.yamlAs you can see below, the kubernetes-ingress resource is created to allow you to access Kubernetes service from outside the cluster from ingress controller service (type load balanced) external IP.

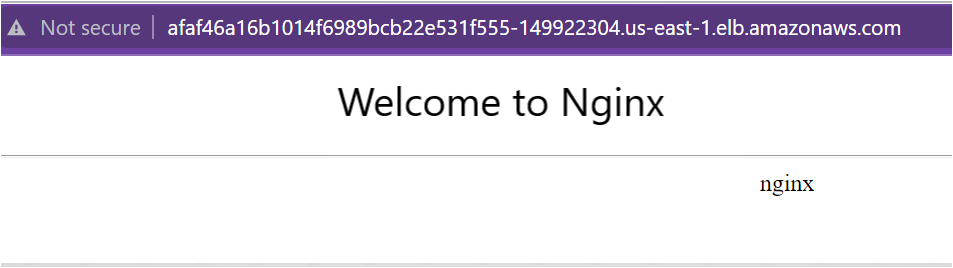

3. Finally, verify that the nginx service is exposed externally by opening your favorite browser and navigating to the load balancer’s external address (the EXTERNAL-IP value of the ingress controller service).

The browser should display the Welcome to NGINX page, which confirms that the ingress is set up properly and working.
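The same check can be made from the terminal. The following sketch wraps curl in a small helper. The function name check_ingress is hypothetical, and the hostname you pass in is whatever EXTERNAL-IP value your own load balancer reports.

```shell
# Hypothetical helper; the function name is illustrative.
# Prints only the HTTP status code returned for "/" on the given host.
# $1 = load balancer hostname, $2 = timeout in seconds (default: 10)
check_ingress() {
  curl --silent --output /dev/null --write-out '%{http_code}' \
    --max-time "${2:-10}" "http://$1/"
}

# Usage: pass the EXTERNAL-IP hostname of the ingress controller service
#   check_ingress "<load-balancer-hostname>"
```

A 200 status suggests the ingress rule matched and nginx-service answered, while a 404 from the controller usually means the request's host did not match the host in the ingress rule. A newly created load balancer can take a few minutes before its DNS name resolves.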

Conclusion

In this tutorial, you learned how to install and set up the NGINX ingress controller, which gives you a quick way to reach Kubernetes services through an external URL.

So which Kubernetes services are you going to expose externally using ingress?