If you’re new to containers and Docker and work primarily on Windows, you’re in for a treat. In this article, you’re going to learn how to set up Docker on Windows 10 using Docker Desktop for Windows (referred to simply as Docker Desktop in this article).

Docker Desktop is the Docker Engine and a management client packaged together for easy use in Windows 10. In this article, you will install Docker Desktop, deploy your first container, and share data between your host and your containers.

Prerequisites for Docker on Windows

This is a walkthrough article demonstrating various steps in Docker Desktop for Docker on Windows. To follow along, be sure you have a few specific requirements in place first.

- An Internet connection to download 800MB+ of data

- Windows 10 64-bit running Pro, Enterprise, or Education edition with release 1703 or newer. This is necessary to run Hyper-V on Windows 10.

- A CPU with SLAT (nested paging) compatibility. All AMD/Intel processors since approximately 2008 are SLAT compatible

- At least 4GB of RAM

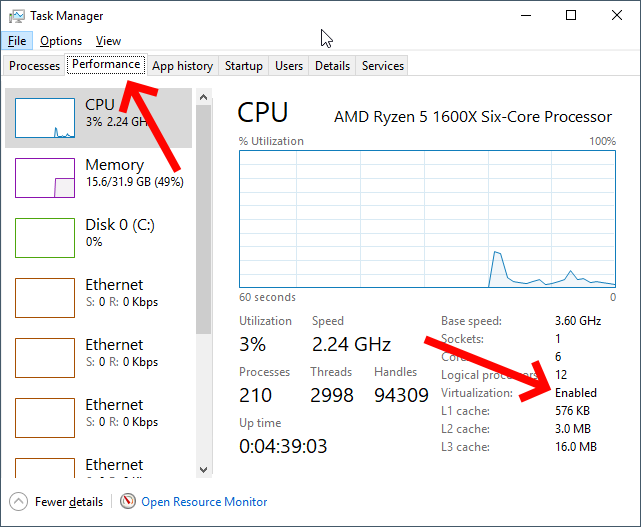

- BIOS hardware virtualization sometimes labeled as Virtualization Technology or VTx. This must be enabled and show as Enabled in the performance tab of Task Manager as shown below.

Downloading and Installing Docker Desktop

Up first, you need to download and install Docker Desktop to get Docker on Windows going. Docker Desktop is available in two releases: a stable release and a testing release.

The stable release is released quarterly and ensures a fully-tested application. In this article, you will be using the stable release.

Warning: Upon installation, Docker Desktop will prompt you to install the Hyper-V hypervisor if it is not already installed. Once enabled, the Hyper-V hypervisor prevents any user-mode hypervisors such as VirtualBox and VMware from running guest VMs. Hyper-V support in VirtualBox and VMware is limited but coming.

You can get Docker Desktop in one of two ways: by downloading it manually from Docker.com or by installing it with the Windows package manager, Chocolatey. Let’s briefly cover each method.

From Docker.com

To download Docker Desktop directly from docker.com, you can go to the product page, register for an account, and download it from there. Registering an account is the preferred route if you intend to use Docker in production.

However, if you’re just testing Docker out for the first time, you can also download it directly, which is much easier.

Once the EXE is downloaded, run the executable and click through the prompts accepting all of the defaults.

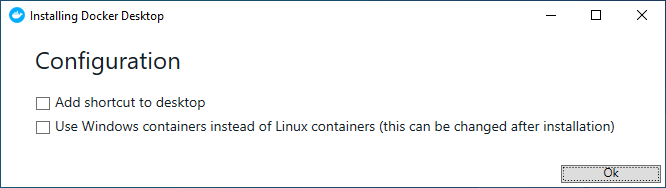

When asked whether you plan to Use Windows containers instead of Linux containers, as shown below, do not enable the checkbox. You will be using Linux containers in this article.

Once installation is complete, reboot your computer.

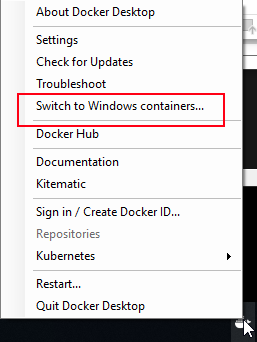

Selecting the option to use Windows containers or Linux containers tells Docker to attach images to a Windows kernel or Linux kernel. You can change this setting at any time after installation by right-clicking the Docker icon in the System Tray and selecting Switch to Windows containers as shown below.

Using Chocolatey

The other option to get Docker Desktop downloaded and installed is with Chocolatey, which automates many of the download/install tasks for you. Open a command-line console (either cmd or PowerShell) as an administrator, then download and install the program in one shot by running the command below.

choco install docker-desktop

Once complete, reboot Windows 10.

If you’d like to try out the testing release at some point, you can download and install it by running the command below.

choco install docker-desktop --pre

Validating the Docker Desktop Installation

Once installed, Docker Desktop automatically runs as a service providing Docker on Windows. It’s shown in the system tray when you log in to Windows after you reboot. But how do you actually know it’s working?

To validate Docker Desktop is working correctly, open a command-line console and run the docker command. If the installation went well, you will see a Docker command reference.

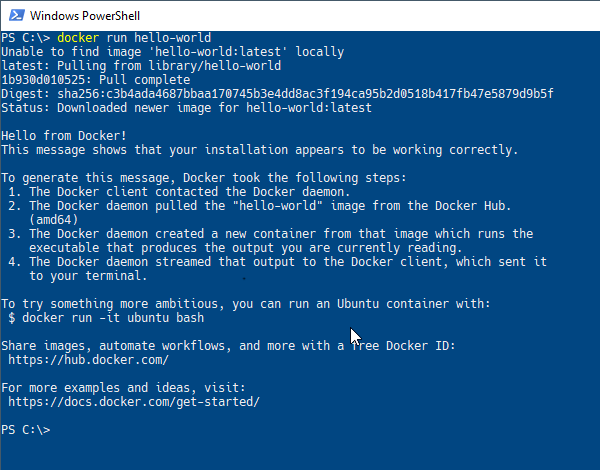

Finally, have Docker download and run an example container image called hello-world by running the command docker run hello-world. If all is well, you will see output like below.

Running Commands in Docker Containers

Docker Desktop is installed and you’ve verified all is well? Now what? To get started with Docker on Windows, one common task to perform in a Docker container is running commands. Through the docker run command, you can send commands from the host (your Windows 10 PC) directly into a container.

To run commands in a container with docker run, you’ll first specify an image name followed by the command. To get started, tell Docker to run the command hostname inside a container created from the alpine image, as seen below.

> docker run alpine hostname

b74ff46601af

Since you don’t have the alpine Docker image on your computer yet, Docker on Windows will download the tiny image from Docker Hub, bring up a container from that image, send the command directly into the container, and shut it down, all in one fell swoop.

If you’d like to keep the container running, you can also use the -it parameter. This parameter, a combination of -i (interactive) and -t (allocate a terminal), tells Docker to keep the container in “interactive mode,” leaving it running in the foreground. When you run a shell such as sh this way, you are presented with a terminal prompt ready to go.

> docker run -it alpine sh

/ #

When you’re done in the terminal, type exit to return to Windows 10.

Accessing Files from the Docker Host in Containers

Another common task is accessing host files from containers. To make this possible, Docker on Windows allows you to link a folder path on your host so that the folder is shared with your container. This process is called binding.

To create a binding, make a folder on a local drive. For this example, I’ll use the E:\ drive and call the folder input. I’ll then create a new text document named file.txt in the folder. Feel free to use whatever path and file you wish.

Once you have the folder you’d like to share between the host and container, Docker needs to mount the folder using the --mount parameter. The --mount parameter requires three arguments: a mount type, a source host directory path, and a target directory path. The target path is where the host directory will appear inside the container.
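As a rough illustration of how those three arguments fit together, here is a minimal Python sketch that assembles the value passed to --mount. The helper names build_mount_arg and build_run_command are hypothetical and exist only for this example; they are not part of Docker or any Docker library.

```python
def build_mount_arg(source, target, mount_type="bind"):
    """Join the three --mount arguments into one comma-separated value.

    Hypothetical helper for illustration; not part of Docker.
    """
    # Use forward slashes for the Windows host path, matching the E:/ form
    # used in this article's docker run example.
    source = source.replace("\\", "/")
    return f"type={mount_type},source={source},target={target}"


def build_run_command(image, source, target):
    """Return the docker run argument list for an interactive container with a bind mount."""
    return ["docker", "run", "--mount", build_mount_arg(source, target), "-it", image]


if __name__ == "__main__":
    # Prints: docker run --mount type=bind,source=E:/,target=/home/TEST -it alpine
    print(" ".join(build_run_command("alpine", "E:\\", "/home/TEST")))
```

Keeping the arguments as a list rather than one long string avoids quoting problems if the list is later handed to something like Python’s subprocess module.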

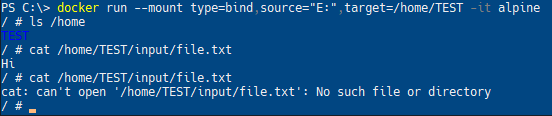

Below you will see an example of mounting the entire E:\ drive of the Windows 10 host so that it shows up as the /home/TEST directory inside of the Linux container.

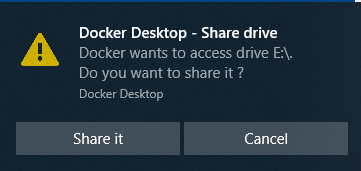

> docker run --mount type=bind,source="E:/",target=/home/TEST -it alpine

When you attempt to mount a host folder, Docker Desktop will ask for your permission to share this drive with the Docker containers as seen below.

If you created the file.txt file in the Windows 10 folder as described earlier, run cat /home/TEST/input/file.txt. You will see that the file’s contents are displayed.

Now, delete the input folder that you just created on the host and run the cat ... command again. Observe that the shell now reports that the file no longer exists.

Mapping Network Ports

Another important concept to know is how Docker on Windows handles networking. For a brief introduction, let’s see what it takes to access a web service running in a container from the local host.

First, spin up a demo image that will run an example webpage. Download and run the Docker image called dockersamples/static-site. You’ll use docker container run to do so.

The following command does four actions at once:

- Downloads a Docker image called static-site from the dockersamples “directory” on Docker Hub

- Starts a container instance from the static-site image

- Immediately detaches the container from the terminal foreground (--detach)

- Makes the running container’s network ports accessible to the Windows 10 host (--publish-all)

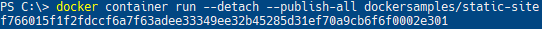

docker container run --detach --publish-all dockersamples/static-site

## Alternate/shorthand syntax that does the same thing:

## docker container run -d -P dockersamples/static-site

## docker run -d -P dockersamples/static-site

Once run, Docker will return the ID of the container that was brought up, as shown below.

Publishing Network Ports

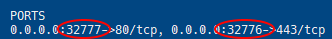

Because you used the --publish-all parameter, local host ports are now mapped to the container’s network stack. You can use the docker ps subcommand to list all running containers, including the ports assigned to each of them. In the instance below, one container is running, mapping host port 32777 to container port 80 and host port 32776 to container port 443.

Docker on Windows assigns containers random ports when using the --publish-all parameter unless you explicitly define them.
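To make the mapping concrete, the sketch below parses a PORTS value of the kind docker ps prints into a table of container port to host port. The sample string is an assumption modeled on the port numbers mentioned above, the parse_ports helper is hypothetical, and only IPv4 entries of the form 0.0.0.0:32777->80/tcp are handled.

```python
import re

# One published-port entry: <host ip>:<host port>-><container port>/<protocol>
PORT_ENTRY = re.compile(
    r"(?P<host_ip>\d+(?:\.\d+){3}):(?P<host_port>\d+)"
    r"->(?P<container_port>\d+)/(?P<proto>\w+)"
)


def parse_ports(ports_column):
    """Return {container_port: host_port} for each IPv4 entry in a PORTS string.

    Hypothetical helper for illustration; entries in other formats are ignored.
    """
    mapping = {}
    for match in PORT_ENTRY.finditer(ports_column):
        mapping[int(match.group("container_port"))] = int(match.group("host_port"))
    return mapping


if __name__ == "__main__":
    # Assumed sample text, built from the ports described in this article.
    sample = "0.0.0.0:32777->80/tcp, 0.0.0.0:32776->443/tcp"
    ports = parse_ports(sample)
    # Look up which host port reaches the web server on container port 80.
    print(f"http://localhost:{ports[80]}")
```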

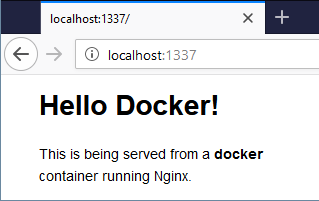

Now open up a web browser and navigate to http://localhost:32777, or to whichever port Docker mapped to port 80 according to the docker ps output. If all goes well, you should see the webpage below.

Changing the Published Ports

You now have a Docker container running in Docker on Windows serving up a simple web page. Congratulations! But suppose you now need a specific port binding rather than relying on the random port selection of --publish-all. No problem. Use the -p parameter.

First, stop the running container by specifying a unique prefix of its container ID. You can find this container ID by running docker ps. Once you know the container ID, stop the container and start a new one while instructing Docker to publish a specific port.

The syntax for specifying a port is <external port>:<container port>. For each port that you want to publish, use the --publish or -p switch with the external and container port numbers as shown below.

> docker stop f766

> docker run --detach -p 1337:80 dockersamples/static-site

When you are specifying a container ID, you only have to type enough of the ID to be unique. If you are only running a single container and its ID is f766f4ac8d66bf7, you can identify the container using any number of leading characters, including just f. The requirement is that whatever you type uniquely identifies a single container.
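The uniqueness rule can be modeled with a few lines of Python. This is a simplified sketch of the idea, not Docker’s actual lookup code; resolve_container is a hypothetical helper, and the IDs are the ones used as examples in this article.

```python
def resolve_container(prefix, container_ids):
    """Return the single container ID that starts with prefix.

    Simplified model of prefix matching; raises ValueError when the prefix
    matches no container or more than one.
    """
    matches = [cid for cid in container_ids if cid.startswith(prefix)]
    if not matches:
        raise ValueError(f"no container matches {prefix!r}")
    if len(matches) > 1:
        raise ValueError(f"{prefix!r} is ambiguous: {matches}")
    return matches[0]


if __name__ == "__main__":
    # With a single running container, one character is enough.
    print(resolve_container("f", ["f766f4ac8d66bf7"]))
    # With a second ID that also starts with "f", a longer prefix is needed.
    running = ["f766f4ac8d66bf7", "fd50b0a446e7"]
    print(resolve_container("f7", running))
```

Typing three or four characters, as in docker stop f766, is usually a comfortable margin when only a handful of containers are running.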

Now go to your web browser and navigate to localhost:1337. Remember, you are not changing the image and it always listens on port 80; you are changing the port translation rule in the Docker configuration that lets you connect to the container.
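The <external port>:<container port> syntax is easy to get backwards, so here is a small Python sketch that splits a specification into its two halves. It covers only the two-part form used in this article; parse_publish is a hypothetical helper, not a Docker API.

```python
def parse_publish(spec):
    """Split '<external port>:<container port>' into a pair of integers.

    Hypothetical helper; handles only the two-part numeric form.
    """
    external, sep, container = spec.partition(":")
    if not sep or not external.isdigit() or not container.isdigit():
        raise ValueError(f"expected <external port>:<container port>, got {spec!r}")
    return int(external), int(container)


if __name__ == "__main__":
    external, container = parse_publish("1337:80")
    # The host listens on the external port; the container keeps its own port.
    print(f"http://localhost:{external} -> container port {container}")
```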

Stopping all Containers

Using docker stop, you can stop a container, but how do you stop multiple containers at once? One way to do so is by providing multiple, space-delimited container IDs. You can see below an example of how to stop three containers with IDs of fd50b0a446e7, 36ee57c3b7da, and 7c45664906ff.

> docker stop fd50 36ee 7c45

If you’re managing Docker containers in PowerShell, you can also use a shortcut to stop all containers. Feed the list of container IDs produced by docker ps -q to the stop command through PowerShell’s command expansion: docker stop (docker ps -q).

Confirm all containers are stopped by seeing no containers listed when you type docker ps.

Cleaning Up

You’ve downloaded a few container images and run some containers that are now stopped. Even though they’re stopped, their allocated storage is still taking up space on the local host disk. You must delete the containers to free up that space and avoid cluttering up your workspace.

To delete a single container, use the docker container rm command as shown below.

> docker container rm <container ID>

Or, to delete all stopped containers, use the prune command as below.

> docker container prune