Your security tools generate thousands of alerts per day. Your analysts investigate hundreds. But the breach that costs you millions? That one slipped through undetected for 29 days. That gap—between what your SIEM sees and what your team can actually act on—is where attackers live. And you’re probably still defending that gap with tools designed a decade ago.

The assumption is that more rules equal more security. The reality is that more rules equal more noise, more alert fatigue, and more opportunities for a sophisticated attacker to hide inside the flood of false positives. Microsoft Sentinel, Microsoft’s cloud-native Security Information and Event Management (SIEM) and Security Orchestration, Automation, and Response (SOAR) platform, takes a fundamentally different approach. Rather than asking your analysts to triage every low-confidence alert, Sentinel’s AI-driven architecture correlates related alerts automatically and surfaces them as the multi-stage incidents that warrant investigation.


Why Reactive Security Fails Modern Environments

The math hasn’t worked in defenders’ favor for years. According to the CrowdStrike 2026 Global Threat Report, the average attacker breakout time—the window between initial access and lateral movement—is now 29 minutes. Your on-call analyst probably can’t even open their laptop in that window, let alone correlate alerts across five different products and determine whether the anomalous login at 2 AM from an unfamiliar IP is a threat or a developer on vacation.

Legacy SIEM platforms were architected for a different era: perimeter-based networks, smaller data volumes, and attack campaigns that moved at human speed. Today’s adversaries operate at machine speed, chain together subtle signals across multiple attack stages, and deliberately generate noise to exhaust your team before making their real move. Rule-based detection logic, no matter how comprehensive, can’t keep up with polymorphic malware variants, credential stuffing at scale, or reconnaissance techniques designed to look like normal administrative activity.

The failure mode is predictable: you build more rules, generate more alerts, overload your analysts, and end up ignoring the low-severity events that turn out to be the early indicators of a serious breach. A Forrester Total Economic Impact study commissioned by Microsoft found that teams running legacy SIEMs spend a large share of analyst hours chasing false positives and still miss critical incidents. If your SOC’s after-action reviews keep blaming “alert volume,” that’s the math catching up to you. The shift to preemptive, AI-driven security operations isn’t a luxury. It’s the only approach that scales.

Key Insight: Preemptive security doesn’t mean predicting the future. It means detecting the early-stage signals of an attack—reconnaissance, anomalous access patterns, lateral movement—before an attacker has achieved their objective. The goal is shrinking dwell time, not achieving omniscience.

The AI Architecture Behind Microsoft Sentinel

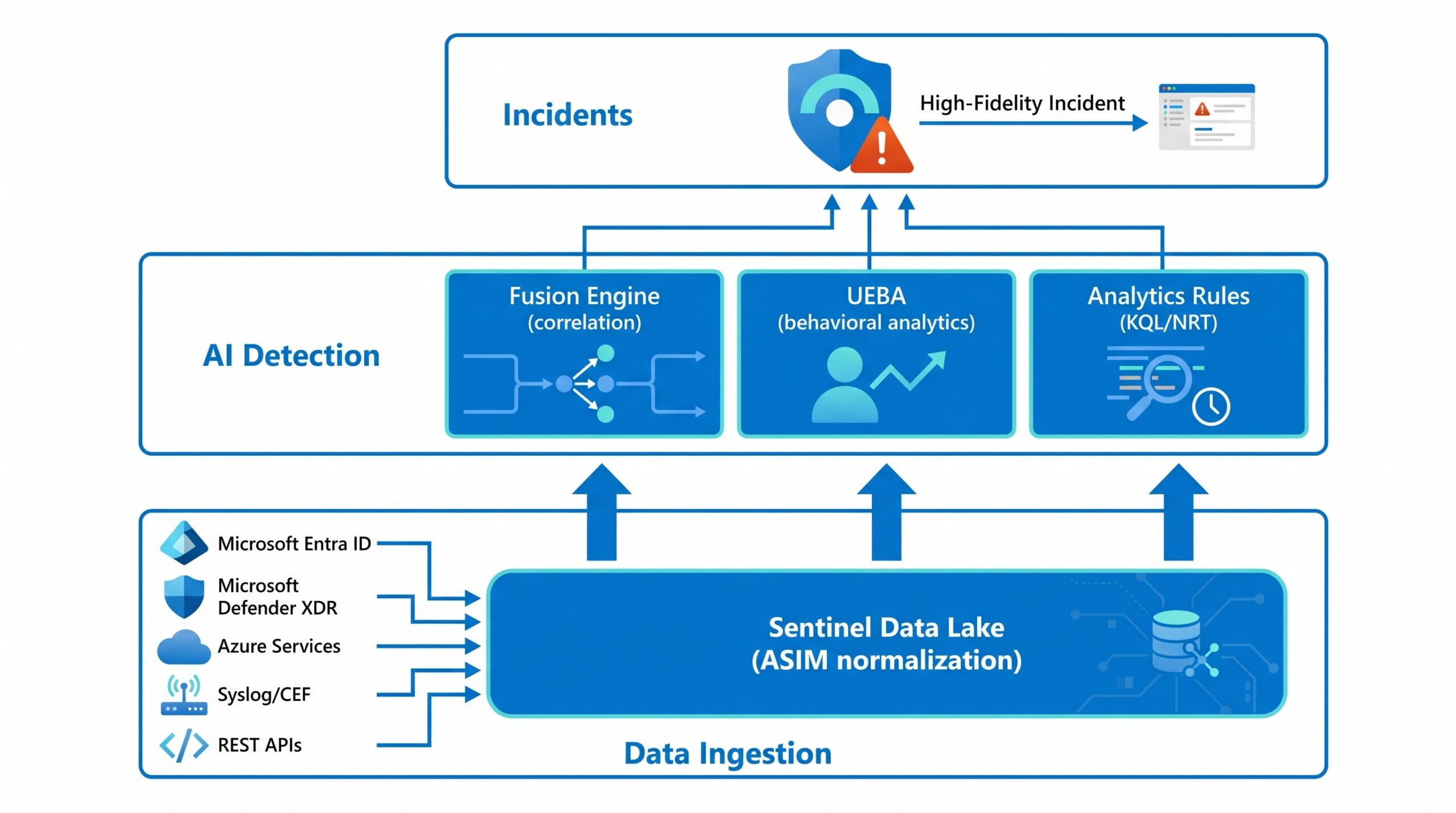

Microsoft Sentinel is built on a cloud-native data lake that ingests telemetry at enterprise scale. Microsoft claims it processes over 100 trillion signals daily across its security ecosystem. Data flows in from Microsoft first-party services (Microsoft Entra ID, Microsoft Defender XDR, Azure services), from third-party platforms via Syslog and Common Event Format (CEF), and from REST API sources. The Advanced Security Information Model (ASIM) normalizes those events into common schemas, mostly through query-time parsers. On top of that, Sentinel builds a structured security graph that models relationships between identities, assets, and threat signals, so it isn’t just collecting logs. (Translation: it’s a security graph, not a log dump, and that distinction is why the SIEM bill is what it is.)

That graph is what powers Sentinel’s AI layers. Instead of matching individual events against rules, Sentinel’s machine learning engines reason across the entire graph, looking for patterns that span users, devices, and time windows that no individual rule could capture.
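Normalization is easier to picture with two differently shaped sign-in records mapped onto one shared shape. The minimal Python sketch below does that. Its field names loosely follow ASIM’s Authentication schema (TargetUsername, SrcIpAddr, EventResult), and the Entra ID fields mirror the SigninLogs table. The sshd record layout and the helper names are hypothetical. Real ASIM parsers are KQL functions that run inside Sentinel, not Python.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuthEvent:
    """Common shape, loosely modeled on ASIM Authentication field names."""
    time_generated: datetime
    target_username: str
    src_ip_addr: str
    event_result: str  # "Success" or "Failure"
    event_product: str


def from_entra_signin(row: dict) -> AuthEvent:
    # Entra ID sign-in logs use ResultType "0" for a successful sign-in.
    return AuthEvent(
        time_generated=row["TimeGenerated"],
        target_username=row["UserPrincipalName"].lower(),
        src_ip_addr=row["IPAddress"],
        event_result="Success" if row["ResultType"] == "0" else "Failure",
        event_product="Entra ID",
    )


def from_sshd_syslog(row: dict) -> AuthEvent:
    # Hypothetical record already parsed out of an sshd Syslog message.
    return AuthEvent(
        time_generated=row["ts"],
        target_username=row["user"].lower(),
        src_ip_addr=row["rhost"],
        event_result="Success" if row["outcome"].startswith("Accepted") else "Failure",
        event_product="OpenSSH",
    )


events = [
    from_entra_signin({"TimeGenerated": datetime(2025, 1, 6, 2, 0, tzinfo=timezone.utc),
                       "UserPrincipalName": "Alice@contoso.com",
                       "IPAddress": "203.0.113.7", "ResultType": "50126"}),
    from_sshd_syslog({"ts": datetime(2025, 1, 6, 2, 5, tzinfo=timezone.utc),
                      "user": "alice@contoso.com", "rhost": "203.0.113.7",
                      "outcome": "Failed password"}),
]

# One aggregation now spans both products because the fields line up.
failures_by_user = Counter(e.target_username for e in events if e.event_result == "Failure")
print(failures_by_user)  # Counter({'alice@contoso.com': 2})
```

Once both sources share field names, a single failure count per user spans Entra ID and Linux hosts. That cross-source view is what normalization buys you.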

| AI Component | Primary Function | Training / Baseline Time |
|---|---|---|
| Fusion Engine | Correlates low-fidelity alerts into high-fidelity multi-stage incidents | 30 days of historical data per environment |
| UEBA | Builds dynamic behavioral profiles per user, host, IP, and application | 14–21 days to establish reliable baselines |
| Analytics Rules | Runs KQL queries on schedule; NRT rules fire within minutes | Immediate (no training period) |

The diagram below shows how those three layers fit together: ingestion normalizes telemetry from every source into a single security graph, the AI engines reason across that graph in parallel, and the outputs converge into one incident queue your analysts can actually triage.

Sentinel’s three AI detection layers operate in parallel against the same normalized security graph, then converge on a single incident queue.

The Fusion Engine: Correlation at Machine Speed

The centerpiece of Sentinel’s automated detection is Fusion, a correlation engine built on scalable machine learning algorithms. Fusion’s purpose is straightforward: take thousands of low-fidelity, low-confidence alerts from across your environment and correlate them into a small number of high-fidelity incidents that represent real, multi-stage attacks.

Without Fusion, detecting an Advanced Persistent Threat (APT) requires an analyst to manually connect dots across different products over days or weeks. Fusion does this automatically by mapping alert clusters against the MITRE ATT&CK framework—so when a suspicious login alert from Defender for Identity gets correlated with an anomalous resource access alert from Defender for Cloud Apps and a data exfiltration pattern from Microsoft Defender XDR, Fusion surfaces that as a single, high-severity incident rather than three separate low-priority alerts. By design, Fusion generates incidents that are low-volume but high-fidelity—the kind your analysts should actually be investigating instead of wading through alert queues.
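Fusion’s actual models are proprietary ML, so the Python sketch below is not its algorithm. It is a deliberately simple rule-based stand-in that shows the shape of the idea, using a hypothetical `Alert` record:

- Group low-severity alerts by a shared account.
- Promote a group to one high-severity incident only when it spans several products and several MITRE ATT&CK tactics inside a time window.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass(frozen=True)
class Alert:
    product: str   # which detector raised it
    tactic: str    # MITRE ATT&CK tactic
    account: str   # shared entity used for correlation
    time: datetime


def correlate(alerts, window=timedelta(hours=24), min_products=2, min_tactics=2):
    """Rule-based stand-in for multi-stage correlation (not Fusion's ML)."""
    by_account = defaultdict(list)
    for alert in alerts:
        by_account[alert.account].append(alert)

    incidents = []
    for account, group in by_account.items():
        group.sort(key=lambda a: a.time)
        # Simplification: the window is anchored at the account's first alert.
        start = group[0].time
        in_window = [a for a in group if a.time - start <= window]
        products = {a.product for a in in_window}
        tactics = {a.tactic for a in in_window}
        if len(products) >= min_products and len(tactics) >= min_tactics:
            incidents.append({
                "account": account,
                "severity": "High",
                "products": sorted(products),
                "tactics": sorted(tactics),
                "alert_count": len(in_window),
            })
    return incidents


t0 = datetime(2025, 1, 6, 2, 0)
alerts = [
    Alert("Defender for Identity", "Initial Access", "alice@contoso.com", t0),
    Alert("Defender for Cloud Apps", "Collection", "alice@contoso.com", t0 + timedelta(hours=1)),
    Alert("Defender XDR", "Exfiltration", "alice@contoso.com", t0 + timedelta(hours=3)),
    Alert("Defender for Cloud Apps", "Collection", "bob@contoso.com", t0),  # lone alert
]
print(correlate(alerts))
```

Three low-priority alerts for alice collapse into one high-severity incident. Bob’s single alert does not qualify, which is the low-volume, high-fidelity trade-off described above.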

Fusion trains on 30 days of historical data per environment, so it learns what normal looks like for your organization instead of relying on a generic baseline. One architectural limitation worth knowing: that training data passes through the machine learning pipeline encrypted with Microsoft-managed keys. It is not encrypted with Customer-Managed Keys (CMK), even if CMK is enabled on your workspace. If your compliance posture mandates CMK for all data processing, evaluate whether Fusion fits your requirements before enabling it.

User and Entity Behavior Analytics: Knowing What Normal Looks Like

UEBA in Microsoft Sentinel—User and Entity Behavior Analytics—builds dynamic behavioral profiles for every entity in your environment: users, hosts, IP addresses, and applications. The engine applies two core methodologies to detect anomalies. Behavioral modeling compares an entity’s current activity to its own historical patterns. Peer group analysis compares that entity’s behavior to similar entities in the organization—so even if an account has never done something explicitly prohibited, UEBA catches when it’s doing something wildly atypical for accounts at its access level.

This distinction matters because most sophisticated attackers aren’t doing things your rules block outright. They’re doing legitimate things at the wrong time, from the wrong location, or at an unusual volume. A developer accessing HR databases they technically have permission to read, but have never touched in three years, is exactly the kind of signal that traditional rule-based detection misses entirely.
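Microsoft doesn’t publish UEBA’s models, so the sketch below substitutes plain z-scores to show the two comparisons described above. It compares an entity against its own history and against its peer group. The `assess` helper, the 3.0 threshold, and the sample counts are assumptions for illustration.

```python
from statistics import mean, pstdev


def zscore(value, baseline):
    """How many standard deviations `value` sits above the baseline mean."""
    mu, sigma = mean(baseline), pstdev(baseline)
    if sigma == 0:
        # Flat baseline: any deviation at all is a first-time behavior.
        return 0.0 if value == mu else float("inf")
    return (value - mu) / sigma


def assess(today, own_history, peer_today, threshold=3.0):
    """Compare today's activity to the entity's own past and to its peers."""
    own = zscore(today, own_history)
    peers = zscore(today, peer_today)
    return {
        "vs_own_history": own,
        "vs_peers": peers,
        "anomalous": own > threshold or peers > threshold,
    }


# A developer who has never queried the HR database suddenly reads 40 records,
# while other developers almost never touch it.
dev = assess(today=40, own_history=[0] * 30, peer_today=[0, 0, 1, 0, 0])
print(dev["anomalous"], dev["vs_own_history"])  # True inf
```

Neither check requires the access to be prohibited. It only has to be unusual for this account or for accounts like it.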

Microsoft Sentinel’s UEBA covers AWS CloudTrail, GCP Audit Logs, Okta, and Azure, giving multicloud environments a unified behavioral lens across their entire estate. UEBA now enriches raw cloud logs with binary behavioral signals—first-time geography, uncommon ISP, unusual action volume—that detection authors can stack to surface attacker behavior blending into routine operations. Budget about 14–21 days for UEBA to establish reliable baselines before trusting its anomaly scores in production.
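Stacking is easy to picture as an “N of M signals” rule. In this minimal Python sketch, the three signal names echo the examples above. The dictionary input, the helper name, and the two-signal threshold are assumptions. A real detection would read UEBA’s enrichment fields in Sentinel rather than a hand-built dictionary.

```python
# Hypothetical signal names modeled on the examples above.
SIGNALS = ("first_time_country", "uncommon_isp", "unusual_action_volume")


def stacked_alert(event_signals, min_fired=2):
    """Alert only when several independent behavioral signals coincide."""
    fired = [name for name in SIGNALS if event_signals.get(name, False)]
    return len(fired) >= min_fired, fired


# A traveling employee trips one signal. An attacker replaying stolen
# credentials from a new country through an odd ISP trips two.
print(stacked_alert({"first_time_country": True}))
# (False, ['first_time_country'])
print(stacked_alert({"first_time_country": True, "uncommon_isp": True}))
# (True, ['first_time_country', 'uncommon_isp'])
```

Requiring coincidence keeps any single benign oddity, like a business trip, from paging anyone.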

Analytics Rules: The Human-Configured Layer

Fusion and UEBA handle the AI-driven detection, but Sentinel’s analytics rules framework provides the human-configured layer on top. Scheduled rules run Kusto Query Language (KQL) queries at defined intervals and can apply statistical operations to identify outliers. Near-Real-Time (NRT) rules run once every minute, so they fire within minutes of a triggering event. Threat Intelligence rules automatically match incoming log data against Microsoft’s global indicator feeds from Microsoft Defender Threat Intelligence (MDTI).
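Threat intelligence matching boils down to checking each observed indicator against a feed. The Python sketch below shows that idea for IP indicators only. It uses the standard-library `ipaddress` module so that CIDR ranges match too. The feed contents, the `SrcIp` field name, and the helper are hypothetical. In Sentinel, the built-in threat intelligence rule does this against Microsoft’s feeds for you.

```python
import ipaddress


def build_matcher(indicators):
    """Compile IP and CIDR indicators once, then test log IPs against them."""
    networks = [ipaddress.ip_network(i, strict=False) for i in indicators]

    def match(ip):
        addr = ipaddress.ip_address(ip)
        # Mixed IPv4/IPv6 membership tests simply return False.
        return [str(net) for net in networks if addr in net]

    return match


match = build_matcher(["198.51.100.23", "203.0.113.0/24"])  # hypothetical feed
logs = [{"SrcIp": "203.0.113.50"}, {"SrcIp": "192.0.2.10"}, {"SrcIp": "198.51.100.23"}]

hits = {}
for row in logs:
    matched = match(row["SrcIp"])
    if matched:
        hits[row["SrcIp"]] = matched
print(hits)  # {'203.0.113.50': ['203.0.113.0/24'], '198.51.100.23': ['198.51.100.23/32']}
```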

For custom scheduled rules to feed into Fusion correlations, each rule must include proper MITRE tactic tags and entity mappings. A scheduled rule without entity mappings is invisible to Fusion’s correlation logic—Sentinel’s ML cannot perform alert matching without entity context. That’s the one operational detail most teams discover too late.

Pro Tip: When building custom analytics rules for Fusion compatibility, always map the Accounts, Hosts, and IPs entity types. Fusion prioritizes these three entity classes when correlating multi-stage attack patterns across products.
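Because a missing mapping fails quietly, it can pay to lint rule definitions before deployment. This Python sketch checks a rule’s properties for tactics and for the three entity types the Pro Tip recommends. The property names are assumed to mirror the ARM template format for scheduled rules (`tactics`, `entityMappings`, `fieldMappings`). The checker and the sample rule are hypothetical.

```python
# Entity types recommended in the Pro Tip; the checker itself is hypothetical.
RECOMMENDED_ENTITY_TYPES = {"Account", "Host", "IP"}


def fusion_readiness_issues(rule_properties):
    """Return reasons a custom rule's alerts may be hard for Fusion to correlate."""
    issues = []
    if not rule_properties.get("tactics"):
        issues.append("no MITRE ATT&CK tactics")
    mappings = rule_properties.get("entityMappings") or []
    # Only count entity types that actually map at least one field.
    mapped = {m.get("entityType") for m in mappings if m.get("fieldMappings")}
    missing = sorted(RECOMMENDED_ENTITY_TYPES - mapped)
    if missing:
        issues.append("missing entity mappings: " + ", ".join(missing))
    return issues


rule = {
    "displayName": "Rare admin tool execution",
    "tactics": ["Execution"],
    "entityMappings": [
        {"entityType": "Account",
         "fieldMappings": [{"identifier": "FullName", "columnName": "AccountUpn"}]},
    ],
}
print(fusion_readiness_issues(rule))  # ['missing entity mappings: Host, IP']
```

Running a check like this in CI over exported rule JSON surfaces the gap before the rule ships, rather than after Fusion has silently ignored its alerts.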

Automated Response: From Detection to Containment in Minutes

Detection without response is just expensive logging. Sentinel’s SOAR capabilities close the loop between what the AI finds and what actually happens to the threat.

The automation layer has two components working in sequence. Automation Rules handle immediate, lightweight triage the moment an incident is created—assigning severity, routing to the right analyst tier, closing known-benign false positives, or triggering a Playbook for more complex responses. Playbooks are built entirely on Azure Logic Apps, which means they can call any REST API, connect to hundreds of prebuilt integrations (ServiceNow, Jira, Microsoft Teams, Slack, Palo Alto Networks, Cisco), and execute complex conditional logic without custom code.
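Sentinel’s automation rules are configured in the portal, not written in Python. As a conceptual model only, the sketch below treats each rule as an ordered condition/action pair evaluated against the incident’s current state. The classes, the `tier2-oncall` owner, and the `Contain-CompromisedUser` playbook name are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class Incident:
    title: str
    severity: str
    status: str = "New"
    owner: Optional[str] = None
    playbooks: List[str] = field(default_factory=list)


@dataclass
class AutomationRule:
    order: int
    condition: Callable[[Incident], bool]
    action: Callable[[Incident], None]


def run_automation(incident, rules):
    # In this model, rules run in ascending order against the incident's current
    # state, so an earlier rule (closing a benign alert) affects later ones.
    for rule in sorted(rules, key=lambda r: r.order):
        if rule.condition(incident):
            rule.action(incident)
    return incident


KNOWN_BENIGN = {"Scheduled vulnerability scan"}  # hypothetical

rules = [
    AutomationRule(1, lambda i: i.title in KNOWN_BENIGN,
                   lambda i: setattr(i, "status", "Closed")),
    AutomationRule(2, lambda i: i.status != "Closed" and i.severity == "High",
                   lambda i: setattr(i, "owner", "tier2-oncall")),
    AutomationRule(3, lambda i: i.status != "Closed" and i.severity == "High",
                   lambda i: i.playbooks.append("Contain-CompromisedUser")),
]

benign = run_automation(Incident("Scheduled vulnerability scan", "Low"), rules)
real = run_automation(Incident("Compromised user", "High"), rules)
print(benign.status, real.owner, real.playbooks)  # Closed tier2-oncall ['Contain-CompromisedUser']
```

The split mirrors the two-component design. Cheap rules do routing and closure, and only the incidents that survive them reach the heavier playbook step.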

What Automated Response Looks Like in Practice

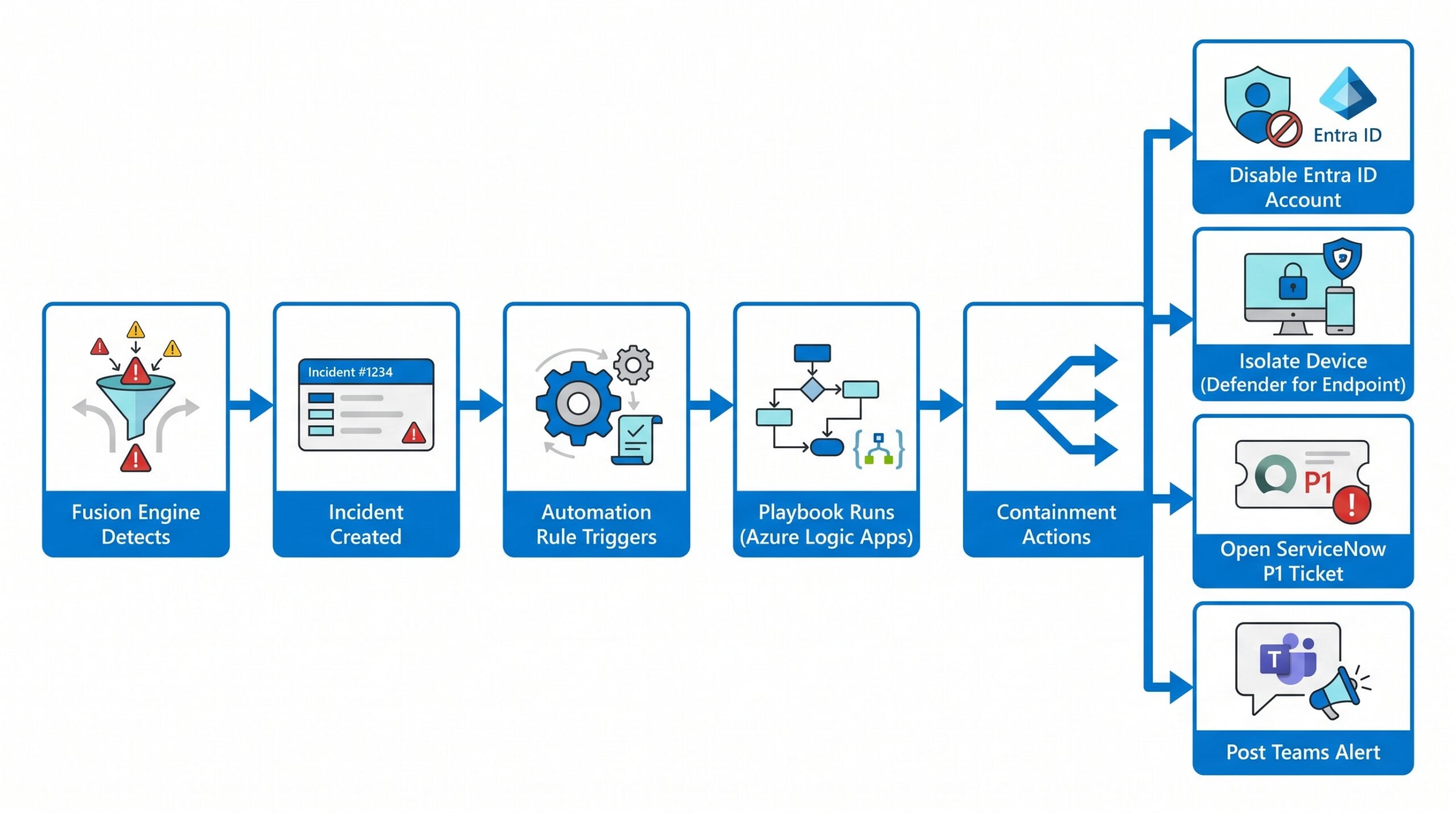

When Fusion detects a compromised user incident—correlating an impossible travel alert with a large-volume SharePoint file download and a simultaneous Entra ID login from an unfamiliar device—an automation rule triggers within seconds. The playbook that fires can disable the user’s Entra ID account, isolate their device from the network via Defender for Endpoint, open a P1 ticket in ServiceNow, and post a detailed alert card to the SOC’s Microsoft Teams channel—all before a human analyst has reviewed the incident. Mean time to respond drops from hours to minutes, because automation runs the moment the incident fires—there’s no on-call paging, no laptop boot, no triage queue.

That end-to-end pipeline is easier to see than to describe. The flow below traces the same incident from initial correlation through the four parallel containment actions that fire in seconds:

Detection through containment in a single automated pipeline — no analyst paging required before the threat is neutralized.
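The four containment actions are independent, which is why they can fire side by side. This is a conceptual Python model; Logic Apps expresses the same idea with parallel branches, not asyncio. The sketch runs hypothetical stand-ins for each action concurrently. It passes `return_exceptions=True` to `asyncio.gather`, so a single failing call (say, a ticketing outage) is reported alongside the other results instead of being raised as the whole response’s outcome.

```python
import asyncio


# Hypothetical stand-ins for the connector calls a real playbook would make.
async def disable_account(upn):
    await asyncio.sleep(0)
    return f"disabled {upn}"


async def isolate_device(device_id):
    await asyncio.sleep(0)
    return f"isolated {device_id}"


async def open_ticket(incident_id, priority="P1"):
    await asyncio.sleep(0)
    return f"{priority} ticket for incident {incident_id}"


async def post_teams_card(incident_id):
    await asyncio.sleep(0)
    return f"Teams card for incident {incident_id}"


async def contain(incident):
    names = ("disable_account", "isolate_device", "open_ticket", "post_teams_card")
    results = await asyncio.gather(
        disable_account(incident["upn"]),
        isolate_device(incident["device_id"]),
        open_ticket(incident["id"]),
        post_teams_card(incident["id"]),
        return_exceptions=True,  # collect failures as results instead of raising
    )
    return {
        name: f"failed: {r!r}" if isinstance(r, Exception) else r
        for name, r in zip(names, results)
    }


report = asyncio.run(contain({"id": 4711, "upn": "alice@contoso.com", "device_id": "LAPTOP-042"}))
print(report)
```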

Sentinel mandates stateful workflows for playbooks (not stateless), which means each playbook execution maintains run history, supports retry logic, and logs every action taken. That audit trail matters when you’re explaining your incident response to a regulator at 3 PM on a Tuesday.
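In practice there is nothing to code here. Logic Apps configures retries declaratively through each action’s retry policy and records run history for you. Purely to illustrate what “retry logic plus a per-attempt audit trail” means, this Python sketch retries a hypothetical flaky action with exponential backoff and logs every attempt. The function names and delay values are assumptions, not Logic Apps defaults.

```python
import time


def run_with_retries(action, max_retries=4, base_delay=1.0, sleep=time.sleep, history=None):
    """Call `action`, retrying with exponential backoff and logging each attempt."""
    history = [] if history is None else history
    for attempt in range(1, max_retries + 2):  # the first try plus up to max_retries retries
        try:
            result = action()
        except Exception as exc:
            history.append({"attempt": attempt, "status": "Failed", "error": str(exc)})
            if attempt > max_retries:
                raise  # retries exhausted; the history still records every attempt
            sleep(base_delay * 2 ** (attempt - 1))  # 1 s, 2 s, 4 s, ... with the defaults
        else:
            history.append({"attempt": attempt, "status": "Succeeded"})
            return result, history


calls = {"n": 0}


def flaky_isolate():
    """Hypothetical action that times out twice, then succeeds."""
    calls["n"] += 1
    if calls["n"] < 3:
        raise TimeoutError("device isolation call timed out")
    return "isolated"


delays = []
result, run_history = run_with_retries(flaky_isolate, sleep=delays.append)
print(result, delays)  # isolated [1.0, 2.0]
for entry in run_history:
    print(entry)
```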

One permissions detail: to run playbooks autonomously, Sentinel uses a service account that must hold the Microsoft Sentinel Automation Contributor role on the resource group where playbooks reside. Grant that role at deployment time—not when your first real incident fires.

Warning: Sentinel’s playbook templates can’t be used to create Standard Logic App workflows directly from the Sentinel UI. Create the workflow manually in Azure Logic Apps first, then link it to Sentinel. If you skip this step and try to deploy a Standard workflow from a template, the linkage will fail silently.

Protecting AI Workloads: The Emerging Threat Surface

If your organization is building or consuming generative AI applications on Azure, threat prevention extends into a domain that traditional SIEM rules simply cannot cover. Prompt injection is the top threat (LLM01) in the OWASP Top 10 for Large Language Model Applications. In a prompt injection attack, an attacker embeds malicious instructions into user inputs or external data sources to manipulate an AI model’s behavior.

Microsoft Defender for Cloud’s AI threat protection plan integrates directly with Azure AI services to detect jailbreak attempts, data poisoning, credential theft via AI interfaces, and unauthorized model behavior in real time. Alerts from this plan flow directly into Sentinel through existing connectors, so your SOC sees AI-specific threat signals in the same incident queue as traditional infrastructure alerts.

| OWASP LLM Threat Category | Defender for AI Coverage |
|---|---|
| Prompt Injection | Detected: jailbreak and injection patterns flagged in real time |
| Data Poisoning | Detected: unauthorized model behavior monitored |
| Sensitive Information Disclosure | Detected: credential theft via AI interfaces |
| Insecure Output Handling | Runtime alert via Microsoft Foundry agent coverage |

KQL for AI-Specific Threat Hunting

For environments running Azure OpenAI, a straightforward Kusto Query Language (KQL) query can surface prompt injection and jailbreak patterns from the AlertEvidence table:

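```kql
// Flag IPs tied to at least `threshold` jailbreak or prompt injection alerts per day
let timeframe = 7d;
let threshold = 3;
AlertEvidence
| where Timestamp >= ago(timeframe) and EntityType == "Ip"
| where Title has_any ("jailbreak", "prompt injection")
// Count distinct alerts, not evidence rows: one alert can carry several IP evidence rows
| summarize AlertCount = dcount(AlertId) by bin(Timestamp, 1d), RemoteIP
| where AlertCount >= threshold
```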

This query flags IP addresses generating three or more jailbreak or prompt injection alerts in a single day across a seven-day window—a signal pattern that distinguishes automated attack tooling from isolated manual probes. High-frequency violations from a single IP are a strong indicator of either intentional abuse or a compromised account being weaponized against your AI infrastructure.

To scope the query to Defender for AI Services alerts rather than every title match, first confirm the exact ServiceSource or DetectionSource value your tenant uses. Run a discovery query in your Sentinel workspace, for example `AlertEvidence | where Timestamp >= ago(7d) | summarize count() by ServiceSource, DetectionSource`. Then add a `| where ServiceSource == "<your-tenant-value>"` filter.

Defender for AI Services covers Microsoft Foundry agents, providing runtime protection for AI agents built on Microsoft Foundry aligned to OWASP’s LLM and agentic AI threat categories. Pricing runs at $0.0008 per 1,000 tokens per month—negligible against the cost of a breach through an unprotected AI endpoint.
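To put the quoted rate in perspective, here is a quick Python calculation at $0.0008 per 1,000 tokens. The monthly token volumes are hypothetical, so substitute your own numbers. `Decimal` keeps the currency arithmetic exact.

```python
from decimal import Decimal

PRICE_PER_1K_TOKENS_USD = Decimal("0.0008")  # rate quoted above; check current Azure pricing


def monthly_cost_usd(tokens_per_month):
    """Estimated monthly charge for a token volume at the quoted rate."""
    return Decimal(tokens_per_month) / 1000 * PRICE_PER_1K_TOKENS_USD


for volume in (50_000_000, 500_000_000, 5_000_000_000):  # hypothetical volumes
    print(f"{volume:>13,} tokens -> ${monthly_cost_usd(volume):,.2f}")
```

Even five billion tokens a month comes to $4,000 at this rate.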

Measuring the Shift: What Preemptive Security Delivers

The business case for this architecture has been put in numbers. A Forrester Total Economic Impact study commissioned by Microsoft modeled a composite organization of 10,000 employees migrating from a legacy on-premises SIEM to Sentinel. It reported these results over a three-year period:

| Metric | Outcome |
|---|---|
| Return on Investment (ROI) | 234% |
| Reduction in false positive alerts | Up to 79% |
| Reduction in manual investigation effort | 85% |
| Reduction in breach likelihood | 35% |
| Legacy SIEM savings (avoided capital cost) | $5.1 million |
| Payback period | Under 6 months |

Data from Forrester Total Economic Impact of Microsoft Sentinel (illustrative model based on composite organization)
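Two quick sanity checks help translate the table into SOC terms:

- Forrester’s TEI methodology computes ROI as net benefits divided by costs. A 234% ROI therefore means modeled benefits of about 3.34 times modeled costs.
- Applying the “up to 79%” false-positive figure to a hypothetical 1,000 false positives a week leaves as few as 210.

The weekly volume is an assumption, and the TEI numbers describe a composite organization, not yours.

```python
def benefit_to_cost_ratio(roi_percent):
    """TEI-style ROI = (benefits - costs) / costs, so benefits / costs = 1 + ROI."""
    return 1 + roi_percent / 100


def remaining_false_positives(weekly_false_positives, reduction_percent):
    """False positives left after a percentage reduction (integer arithmetic)."""
    return weekly_false_positives * (100 - reduction_percent) // 100


print(round(benefit_to_cost_ratio(234), 2))  # 3.34
print(remaining_false_positives(1_000, 79))  # 210
```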

The up-to-79% reduction in false positives is the number that should end conversations about whether AI-driven detection is “good enough yet.” When your analysts are chasing up to 79% fewer phantom threats, they can actually investigate the ones that matter. And the 85% reduction in manual investigation effort isn’t magic. It’s Fusion, UEBA, and automated playbooks doing the correlation and first-response work that previously consumed analyst hours.

One additional metric worth tracking once Sentinel is operational: Mean Time to Detect (MTTD) and Mean Time to Respond (MTTR) per incident severity tier. These two numbers, measured monthly, will tell you whether your preemptive security investment is actually changing your security posture—or just changing your dashboards.
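Definitions vary, so pin them down before you trend them. This Python sketch uses one reasonable pair of definitions among several:

- MTTD is incident creation time minus first malicious activity.
- MTTR is closure time minus creation time.

Both are grouped by severity. The field names mirror columns in Sentinel’s SecurityIncident table (FirstActivityTime, CreatedTime, ClosedTime, Severity), but the records are made up.

```python
from collections import defaultdict
from datetime import datetime, timedelta


def mean_times_by_severity(incidents):
    """Average detect and respond durations per severity tier."""
    detect, respond = defaultdict(list), defaultdict(list)
    for inc in incidents:
        sev = inc["Severity"]
        detect[sev].append(inc["CreatedTime"] - inc["FirstActivityTime"])
        if inc.get("ClosedTime") is not None:  # open incidents have no MTTR yet
            respond[sev].append(inc["ClosedTime"] - inc["CreatedTime"])

    def avg(deltas):
        return sum(deltas, timedelta()) / len(deltas) if deltas else None

    return {sev: {"MTTD": avg(detect[sev]), "MTTR": avg(respond[sev])} for sev in detect}


t = datetime(2025, 1, 6, 2, 0)
incidents = [
    {"Severity": "High", "FirstActivityTime": t, "CreatedTime": t + timedelta(minutes=10),
     "ClosedTime": t + timedelta(minutes=40)},
    {"Severity": "High", "FirstActivityTime": t, "CreatedTime": t + timedelta(minutes=20),
     "ClosedTime": t + timedelta(minutes=110)},
    {"Severity": "Medium", "FirstActivityTime": t, "CreatedTime": t + timedelta(hours=2),
     "ClosedTime": None},
]
for severity, stats in mean_times_by_severity(incidents).items():
    print(severity, stats)
```

Run it monthly over the same tiers and watch the trend rather than any single month’s value.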

Where to Go From Here

Building a preemptive security posture with Microsoft Sentinel isn’t a single project. It’s a maturity progression. Start by connecting your core data sources (Entra ID, Microsoft Defender XDR, Azure Activity Logs), enabling the Advanced Multistage Attack Detection rule for Fusion, and activating UEBA—then spend the next 30 days letting the ML models build reliable baselines before tuning anything. From there, the highest-leverage moves are building automation rules for your most common false positives, deploying the UEBA Essentials solution for pre-built hunting queries, and layering in threat intelligence connectors for predictive indicator feeds.

The Fusion engine, UEBA, and SOAR automation each address a different failure mode of reactive security operations: alert fatigue, insider-threat blind spots, and slow response times, respectively. Together, they shift your security operations center from a team that investigates breaches after the fact to one that closes incidents before attackers achieve their objectives.

Microsoft Sentinel is moving to the unified Microsoft Defender portal as the exclusive interface—if your workflows depend on Azure portal access for Sentinel, plan that transition now. The architecture exists. The AI capabilities are production-ready. The question isn’t whether preemptive security is achievable—it’s whether you can afford to keep running reactive operations while attackers move at 29-minute breakout speeds.